Machine Culture is a Substack on AI governance and emergent order.

I publish 2-3x each week, writing about:

Compute governance ⚡

AI, Congress, and the federal bureaucracy 🤖

Great power competition 🌐

Industrial policy and central planning 🏭

Culture, cognition, and epistemology 🧬

Organized in these segments:

— Deep Tracks — Long essays, transcripts, and research 📻

— Field Notes — Short posts 💭

— Model Convos — Micro interviews 🗪

— Paperweights — Links with commentary 🗐

— Other Minds — Guest authors 🧠

If you want to know about me and why I write, keep scrolling…

Background: The title of this project is taken directly from an eponymous academic article on “culture mediated or generated by machines.”

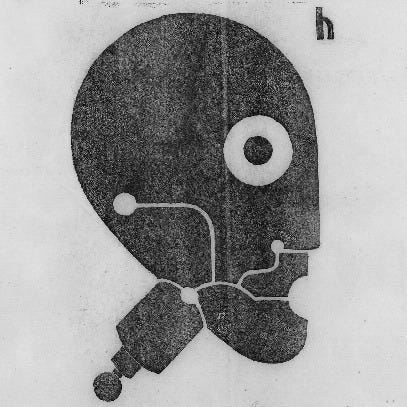

The logo is a public domain work of art created by the German artist Heinrich Hoerle (1895–1936). The work's title, “Prosthetic Head,” is to my mind a straightforward metaphor for the promise and peril of AI in our extended cognition.

The concept of “emergent” or “spontaneous” order was articulated last century by the economist F.A. Hayek and the philosopher Michael Polanyi, among others. Of course, the concept has much deeper roots, a web of inquiry stretching from Aristotle to Darwin.

However, the Scottish philosopher Adam Ferguson probably offered the best short description of the phenomenon in his 1767 work An Essay on the History of Civil Society, in which he referred to outcomes that were “the result of human action, but not the execution of any human design.”

For our purposes, we should modify Ferguson’s statement to include “human-machine action,” since the agentic boundaries between humans and machines have become ambiguous.

Many AI policy proposals coming out of DC, London, Brussels, Silicon Valley, and Beijing seem to have one major thing in common: a great faith in the power of human reason, foreknowledge, and planning.

I think that’s deeply misguided — something I outline in my “Thesis & Prospectus” launch essay.

This first-person notebook, which is hypertextual to an intentional extreme, is an attempt to explain, however incompletely, the pitfalls of central planning in science and technology.

Author: I (Ryan Hauser) am a Research Fellow at the Mercatus Center at George Mason University.

My research focuses on the governance of emerging technologies, particularly artificial intelligence, and the intersection of science, policy, and society.

I am an alumnus of the Frédéric Bastiat Fellowship and the Diverse Intelligences Summer Institute.

Prior to joining Mercatus, I worked as a writer and editor in Washington, DC, including stints with MNI, Blue Heron Research Partners, the Scientific Labs Management Project, and ChinaTalk.

I am currently pursuing graduate studies in the Department of Science, Technology, and Society at Virginia Tech (my home program) and the Department of Economics at George Mason University.

Broadly, I see myself working at the crossroads of PPE (philosophy, politics, and economics) and HPS (history, philosophy, and social studies of science).

My academic work centers on interdisciplinary questions related to risk, rationality, and norms in science and technology.

My commentary has appeared in the Associated Press, Inside AI Policy, ChinaTalk, Decrypt, and Reason.

I am a stalwart devotee of the band Champs, an indie pop, alt-folk fraternal duo from the Isle of Wight, a place I have never been.

To contact me, please don’t hesitate to send an email.