Model Convo: Kurtis Hingl

On the Growth of Knowledge, Scaling AI Science, and Saint Leibowitz

Welcome back to the Model Convo series: micro interviews with researchers in AI governance and adjacent fields.

This week’s convo is with Kurtis Hingl, a PhD Fellow at the Mercatus Center at George Mason University, an incoming Teaching Assistant Professor of General Business at West Virginia University, and an affiliate of the Kendrick Center for an Ethical Economy.

He writes at The Hunchbox and Price Theory Palooza here on Substack.

What was your path into AI and metascience, and what are you working on now?

I studied economics, math, and philosophy for my undergraduate degree (a pretty typical path), and I went to economics graduate school to study industrial organization, the competitive processes of markets and industries.

I quickly found myself asking more and more questions about the industry of scientific research itself. Whether that came from my lack of creativity or because I was thinking about recursion from reading Gödel, Escher, Bach, I’m not sure. But I started applying the language and logic of markets and social systems to science, and I haven’t stopped.

Of course I was reading a lot of Hayek, Polanyi, and Popper on the social nature of scientific knowledge and its role in a free society, and then I also read Joseph Henrich’s The Secret to Our Success early in grad school.

In my opinion, once you start thinking about the growth of knowledge, it’s hard to think about much of anything else, to paraphrase the Robert Lucas quote passed down to his student Paul Romer. At the same time, I’ve always been more comfortable with the microeconomic questions of incentives, property rights, and organizational structure, so that’s where I have pushed my focus within the broader metascience ecosystem.

My current work examines how scientific fields differ in their organization, in part due to the “testability” of the subject matter. But I am also trying to keep up with all the news in AI, think about how it relates to science, and incorporate it into my own research as much as possible.

AI is the most interesting thing to wrestle with in every facet of my work: science, market processes, education, you name it. It is impossible for a young economist to not be interested in AI. Actually, it’s impossible for any awake and curious person to not be interested in AI.

What works of art have most shaped your views on AI and metascience?

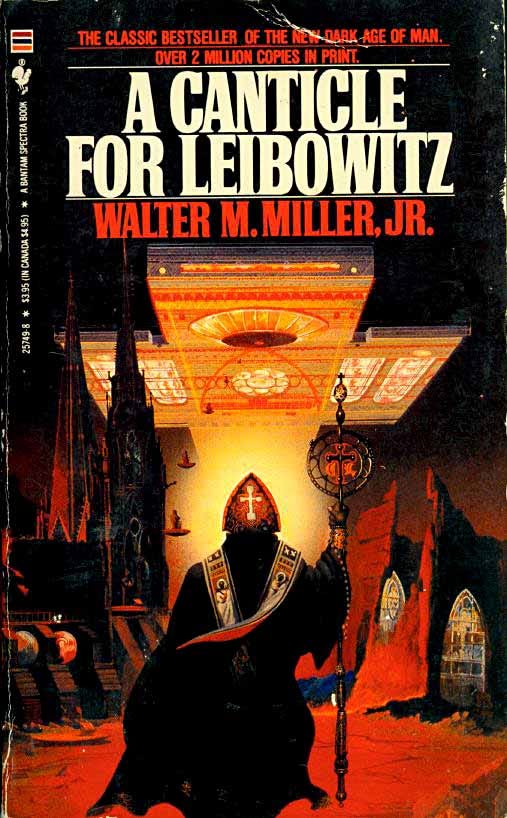

A cliché for sure, but no doubt A Canticle for Leibowitz. How is dispersed knowledge valued and preserved? Who gets to decide what is worth keeping from the past? What’s the value of the thinker, the tinkerer, or the mere copy-cat? Why shouldn’t we all just focus on the immediate practical concerns around us? Can the thinker also be practical? Those are not even the main questions Miller wrestles with, but a few that come to mind that have infiltrated my thought. And there’s also the great public radio audio drama.

On AI, I can’t say that there’s been a major work of art that’s influenced me, probably to my own detriment. I can say that I spent a lot of time growing up working in landscaping and excavating, a lot of time camping and backpacking, and a little bit of time working in manufacturing.

It wasn’t that weird, then, when we all started to talk to LLMs. I’ve been communicating with technology deliberately all of my life. Embodied knowledge as an extension of the self is nothing new in the human condition, and yes there is often a conversation going on, if not in English. Maybe the right answer is the scene in Anna Karenina where Levin learns to mow his fields.

What’s your most contrarian take on AI and metascience?

Science might get cheaper, but the frontier of science will only get more expensive and will be directed by elite scientists, and that’s okay. But this is contrarian more in mood than in fact: many people anticipate concentration of power but also think it’s very bad.

AI greatly increases the productivity and output of the everyman, but it does so at higher rates for the elites. What we really care about is the big breakthroughs on the frontier of knowledge, and these will happen in the partnerships between leading AI labs and leading scientists, not from the masses that have also leveled up. That is okay, good in fact.

What this means for metascience is that the big gains are from bargaining with the big players, not overhauling the system or going renegade trying to build a new system. As much as I sometimes hold the common disdain for the layers of institutional status quo, they will continue to dominate.

Academic publishing, the PI-lab-grant-funding situationship, and the concentration of prestige among a small number of incumbent institutions are here to stay. Again this is okay! We don’t have to give up on the hope for building better science. It’s just that it is more likely to come via convincing the wielders of power than by overthrowing them. We will need to be more Coasean.

I guess predicting the continuation of the status quo isn’t that contrarian, but it sometimes seems like it in a community always thinking about progress. And on concentration, Average is Over is already a well-established point, so let me give a hopeful caveat where there are still huge gains from leveling up the masses.

Imagine if it became a norm for, say, the city council of a small town to run projections (GDP, population, traffic) to help decide if that bike lane should go in. I’d like to think this moves us to better equilibria than the evidenceless persuasion running most public and private decision making.

There are versions of our future where this kind of marginal improvement in governance will lead to large and surprising effects. A cure for cancer might be just as likely to come from an innovation in rules around “approval” as from a hi-fi whole-cell simulator.

What are you reading, watching, or listening to now?

I noticed it’s come up a couple times in these conversations, but yes, I am coincidentally reading East of Eden. Surprisingly relevant, especially the importance of naming things. Much of life (and science) is about naming things, as it is Adam’s task in the Genesis narrative.

In economics, we sometimes talk about “identification”: giving a claim an identity by carefully distinguishing it from the relevant counterfactual. In my household, my toddler is just learning to speak. His task, like Adam’s (both in Genesis and in Steinbeck), is to name things. In public discourse, much talk is, “Is that thing really an X or is it a Y?”

I’m always reading new econ papers, reading Twitter and Substack to keep up with AI, and always trying to poke through something non-fiction that’s bigger and deeper. Right now that’s Montesquieu’s The Spirit of the Laws.

I’m always listening to some combination of mewithoutYou, The Mountain Goats, and bluegrass. Also, Willow’s new album is quite good, though I don’t know what it’s about to be honest.

I don’t watch much of anything. There are a couple of YouTube series of runners preparing for Boston that I was following, and analysis videos from the Candidates while that was happening.

Go-to emerging science / tech music track?

OK Computer is a classic, though like a lot of the music I end up loving, I don’t fully identify with the message. How about Pete Seeger’s “We Shall Overcome”?