The Perma-Twilight of Our Youth

Will AI extend our adolescence? It's a solid maybe...

One of the many compelling parts of There Will Be Blood is the high degree of agency and economic activity given to the film’s young characters. From Daniel Plainview’s adopted son H.W. to the doomed Eli Sunday, we see males under the age of 18 playing a major role in the organization of political and economic life. Opportunities for women, of course, were more constrained. As far as the film goes, the results of this youthful involvement are mixed, owing more to the fallen nature of each character than to their age, sex, or religion. Still, the West was not settled by the old and propertied, but by the young and landless.

While Paul Thomas Anderson’s 2007 movie is fictional, it depicts one aspect of our species that has existed across cultures for millennia. Humans without fully developed prefrontal cortices have historically been granted a much wider degree of freedom and responsibility than they are granted today. That all started to shift considerably, however, in the wake of the Industrial Revolution, or what Deirdre McCloskey calls “The Great Enrichment.” Now, the emergence of new, transformative varieties of artificial intelligence might accelerate that trend. Or reverse it. And what you think about either outcome probably has a lot to do with your core epistemological and metaphysical views.

Let’s start with the ethnographic data. Anthropologists and historians have given us plenty of detailed accounts of the relative agency granted to individuals under 18 throughout history. Ulysses S. Grant, for example, ran what amounted to an occasional horse-drawn carriage taxi service throughout Ohio, carting adults along muddy roads and across engorged creeks, all at the age of twelve. During that same period, Hetty Green became the bookkeeper for her father’s whaling business when she was just thirteen. She later became the “Queen of Wall Street” and was perhaps the world’s richest woman by the time of her death in 1916. Stories of this nature are abundant and may cause some embarrassment for hovering parents and timid offspring alike.

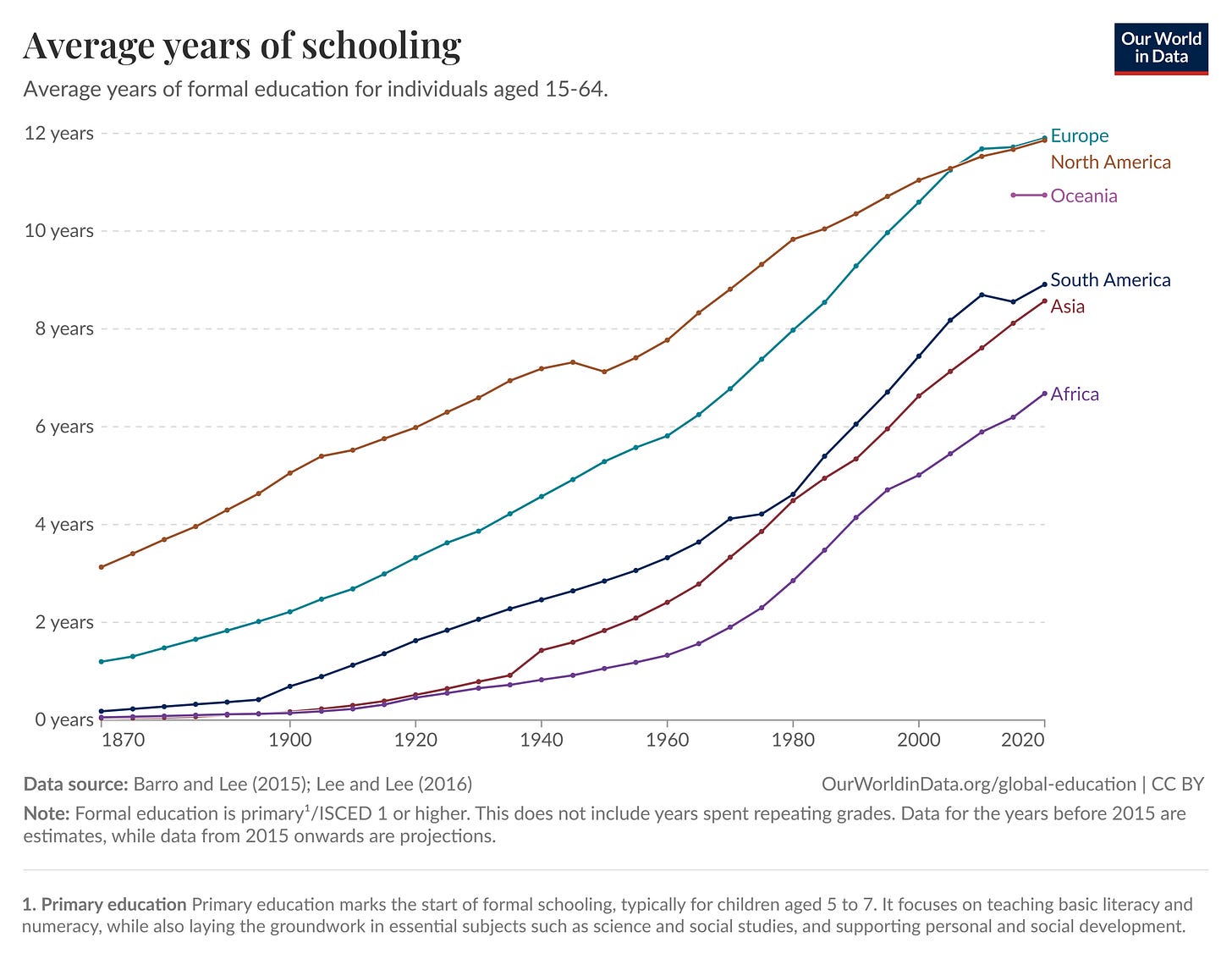

In terms of cultural evolution, however, these high-agency childhood norms should not surprise us. They are the norm globally and historically. What is strange is the emergence of protracted adolescence and the delay of work and reproduction. Humans are now staying in school longer and having children later. In the US, for example, individuals between the ages of 15 and 64 had attained an average of 4.3 years of schooling in 1870. As of 2020, that number is 13.3 years. Similarly, the mean age of US mothers at first birth climbed from 21.4 in 1970 to 27.5 in 2023. The US is hardly unique: other countries mirror these trends.

The shift is better expressed concretely. A nomadic hunter-gatherer of 12 years of age could be expected to engage in a wide range of economic activities: harvesting, preparing food, hunting game, scouting, surveillance, and (tragically) even warfare. Today, a young Bostonian is expected to undergo at least 12 years of formal education, ideally 16, and possibly as many as 24 years if she wishes to become a doctor or professor. We have been living in a period where the return on this extraordinary kind of individual investment is taken for granted, and the returns to this level of extended human capital formation have been enormous.

We should not be too surprised by these trends. It is reasonable to expect a larger, richer, more socially complex and more specialized population to extend childhood and adolescence. This is because the return on child labor decreases as the return on human capital formation increases. When groups are small, whether it’s a small group of pre-Columbian hunter-gatherers or the even smaller prairie homestead depicted by Laura Ingalls Wilder, everyone must pitch in as soon as they are able. The group is too small, too fragile to do otherwise.

But as groups grow and integrate into larger social networks, individuals can finally breathe and think about the long run. Younger individuals are required less and less to become immediate contributors to social welfare. They move from being a net cost to a net benefit. But the paradoxical result is that we end up in a world in which adulthood feels like it is always being pushed further back.

This move toward extended adolescence and more formal education wasn’t purely market driven. As Claudia Goldin notes, compulsory schooling and laws against child labor were also major drivers of these trends. (Emergent orders include inputs from states too, after all.) The result was a kind of positive feedback loop that radically restructured work in our industrial society.

How might artificial general intelligence (AGI) — or at least transformative AI — affect this trend? To my mind, the effects of this technological shock are not straightforward and might unleash competing dynamics.

The argument that AGI will extend adolescence is the safest one to make. As the psychologist and forecasting expert Phil Tetlock notes, “Simple extrapolation algorithms, historically, are hard to beat.” If technological development and economic growth have ushered in more education and later reproductive ages historically, especially over the last 200 years or so, then we should bet on the continuation of this macro trend. Under these conditions, the transformative nature of AI would be to radically accelerate the preexisting trend toward deeper adolescence.

Along these lines, as the economy becomes ever more complex, it will likely be necessary to spend even greater amounts of time undergoing formal education, just to get ahead or stay afloat. That would hold true even if the value of education is (or always has been) primarily social, more about status and the imitation of prestige than about gaining “hard skills.” To put it crudely, if the robots are doing everything for us (assuming we all get along just fine), we’re going to get to spend a lot of time figuring out what else we can and should be doing. If that sounds a lot like being a confused and frustrated teenager, unsure of where to go or who to become… Well, that’s because it is.

But how might an AGI shock shorten adolescence? One way in which AGI might partly dam the fountain of youth is by lowering the return on human capital formation for any task that can be automated. If you spent years of your life learning to code, so the argument goes, you might expect the diffusion of Claude Code to undo a lot of your effort. If your plan was to work as a human computer, like the number-crunching kind brilliantly depicted in the book and film Hidden Figures, then you will likely be displeased to hear about the radical reduction in job listings for human computers. The advent of the semiconductor and the diffusion of mainframes from International Business Machines have made your skills more widely accessible to those who need them. The case that you should stay in school longer weakens.

The shock of this new world means that a smart, determined individual — like a Plainview or an H.W. — can start working immediately without great amounts of formal education. Drive, gumption, and monomaniacal focus come back into play, to say nothing of their moral desirability. As Revana Sharfuddin — a labor economist and fellow Mercatian — argues:

[T]he unique promise of AI lies in its ability to… broaden decision-making beyond a narrow elite. By combining information, explicit rules, and learned experience, it can amplify human judgment and enable workers with basic training to take on tasks once reserved for doctors, lawyers, or engineers. Rather than replacing expertise, AI can democratize it — revitalizing the middle-skill, middle-class core hollowed out by automation and globalization.

However, a core reason, perhaps the core reason, that modelling these scenarios is difficult is that we do not know the cognitive and mechanical limits of tacit knowledge. If you are inclined to think all tacit knowledge is just social, sensory, and other forms of data waiting to be captured and analyzed, then you should bet on a deepening adolescence that may, if we are not careful, extend to a high degree of disempowerment. The AIs may not become our predators so much as our parents.

But if you think tacit knowledge really is tacit for humans and computers alike — that there are domains in which “we can know more than we can tell” in ways that are illegible to ourselves and to our machines — then you might think there is some hope for reducing our adolescence and refiring our agentic engines. Maybe the next Ulysses S. Grant will be running a Waymo auxiliary business? Or maybe the next Hetty Green will be using Claude Code to run her own at-home crypto hedge fund?

These cautiously optimistic outcomes, however, probably require a rejection of physicalism. And that is fine by me, though the case remains to be made.