Paperweights, 4th Ed.

Chip Tariffs, Tacit Knowledge, and AI Parties

I. US and Taiwan Reach Trade Deal, with Semiconductor Chips and China in Focus

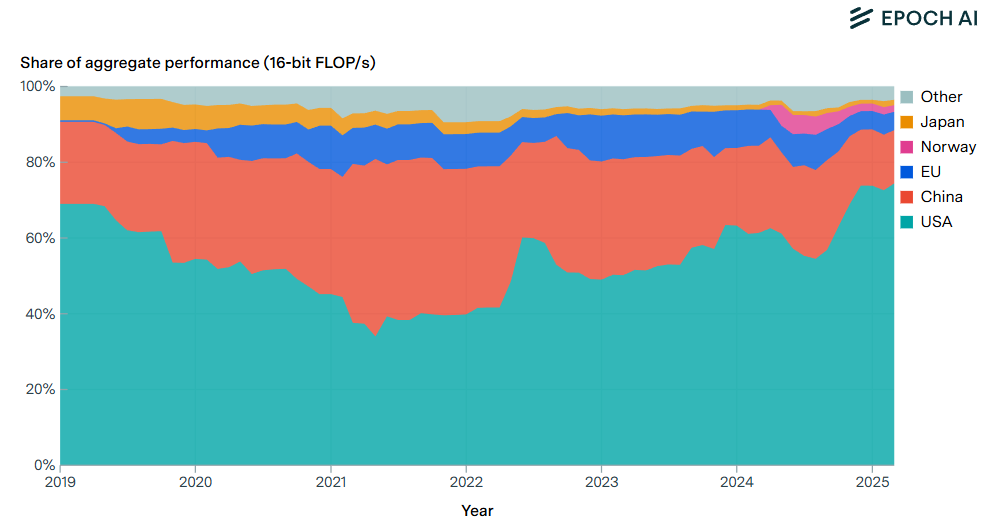

The US and Taiwan “clinched a trade deal on [January 15th] that cuts tariffs on many of the semiconductor powerhouse’s exports, directs new investments in the US technology industry and risks infuriating China.” Chip firms like TSMC got some major carveouts, but the general tariff on most exports from Taiwan to the US is now 15 percent, down from 20 percent.

This move comes one day after the Trump administration placed 25 percent tariffs on select advanced chips, again with major carveouts, this time for startups, consumer devices, and US data centers. The White House says more chip tariffs are coming.

That means the US now taxes Nvidia H200 chip imports (again, with major exceptions) while effectively taxing recently approved H200 exports to China. H200s must now enter the US for certification before they can be sent back across the Pacific, so they incur the 25 percent import tariff on the way in, and the government sidesteps the constitutional ban on taxing US exports.

If this seems like a dubious way to limit the AI capabilities of China while boosting the capabilities of the US, that’s because that is not the aim of the current administration. Instead, we have a mercantilist Rube Goldberg machine that aims to expand sales for US chip and AI firms, restrict competitors, and give the US government a major cut while doing it.

This is consistent with the National Security Strategy published last November/December. That document (unlike last summer’s White House publication, America’s AI Action Plan) makes no mention of maintaining the US lead in advanced compute or denying adversaries access to advanced chips. Rather, the NSS centers on reshoring and protectionism writ large, arguably at the expense of both American growth and national security.

II. American AI Exports Program Comments: Accelerator-Level Confidential Computing for Secure AI Exports

Speaking of chip exports, my indefatigable colleague Elsie Jang wrote a public interest comment last month for the US Department of Commerce’s RFI on “questions relating to the American AI Exports Program.” Here’s the bottom line up front:

The core recommendation is that when evaluating AI technology packages for export, the Department should consider whether the hardware supports confidential computing at the accelerator level. For the highest-risk deployments, this technology can protect frontier AI model weights running in overseas data centers, even when local operators or state actors have physical or administrative access.

Confidential computing is a neglected domain in today’s ongoing chip wars, so I’m excited to see the work Elsie is doing here.

This is a wonderful mixed-media meditation on tacit knowledge — a core concept articulated by the philosopher and scientist Michael Polanyi. Many frontier labs and AI startups are working to make the tacit legible — how else can we build AGI? — but Polanyi’s concerns resist an easy solution. Is this a permanent bottleneck?

IV. AI 2027 Prediction Tracker

AI 2027 made a big splash last year, offering a detailed scenario illustrating the rise of unaligned AI. This site breaks the scenario’s forecasts down into more granular claims about the future, then tracks their outcomes. Self-recommending, as it were.

V. How to Party Like an AI Researcher

This was one of my favorite pieces of on-the-ground reporting by the inimitable Jasmine Sun, who attended the flagship AI conference NeurIPS, which was hosted in San Diego this December.

An excerpt:

I jump into a 20-person session with the UVA economist Anton Korinek, who’s a bit of a radical in his discipline for entertaining the plausibility of hyperbolic GDP growth from transformative AI. He is soft-spoken, angular, and has a thick Austrian accent. At one point, stumbling through the beginning of a sentence, he says “I’m starting to run into nonsensical token generation in my reasoning chain.”

When the room splits into breakouts to talk redistribution, two men in mine challenge Korinek’s assumptions instead. He’s too conservative, they think: “I expect GDP to double every day.”

As far as I remember, these folks’ counter-argument went something like this: After superintelligence, we should expect that human wages will go to zero but capital to infinity. There will be a brief transition period where we have cognitive superintelligence but not advanced robotics—so we can all work as the AI’s meat-slaves—but after that, AI will recursively self-improve, tiling the galaxy in space factories, and rendering human labor useless. But this participant didn’t think this was a future to fear: “The vibe of this conference is oh no, what if humans lose control over the future, and I’m more like, oh no, what if the monkeys are still running things?”

“So how did you estimate one day as the GDP doubling rate?” I ask.

“Just vibes,” his friend replies. “Like, that’s how fast bacteria double.”

You should read the whole thing and subscribe to Jasmi.News.

[This one’s for Caleb and the rest of the town. Next season…]