Model Convo: Andy Masley

On Effective Altruism, Philosophy of Mind, and Aesthetic Experience

Welcome back to the Model Convo series — micro-interviews with researchers in AI policy and related fields. If you know someone I should interview — maybe that’s you? — please email me. This week’s convo is with Andy Masley, an independent writer here on Substack.

What was your path into AI, and what are you working on now?

In college I double-majored in philosophy and physics. I had come in as a standard internet atheist, and was really interested in physicalism, the idea that there isn’t anything extra-physical affecting the mind or anything else about the world. Once even simple high school Newtonian physics really clicks for you, it’s hard not to think that way.

But there was this gigantic hole in my worldview: at a gut level it was hard not to think that consciousness and the mind seemed completely magical and distinct from the physical world. I had very strong Cartesian intuitions. I got lucky and had a professor my freshman year who was really excited about the philosopher C.S. Peirce. I ended up reading a lot of him and felt for the first time like I was actually getting real footing in philosophy of mind.

A central goal for Peirce is demystifying the mind’s abilities and pushing against Cartesian intuitions. The best place to start is “Questions Concerning Certain Faculties Claimed for Man,” and then probably his best essay “Some Consequences of Four Incapacities.” Those made it easier for me to think about the mind as a natural system, and led me to reading a lot of W.V.O. Quine and Daniel Dennett later on. Looking back, I think about just how lucky I am that 18-year-old me stumbled on this stuff. It seems like some of the absolute most relevant ideas I could’ve been engaging with at the time.

I also read Donna Haraway’s A Cyborg Manifesto in a philosophy of technology class — it’s a very fun critical theory paper — and became passively interested in continental philosophy of technology, though I know way less about it than my main focus areas in college. Turned out to be useful later.

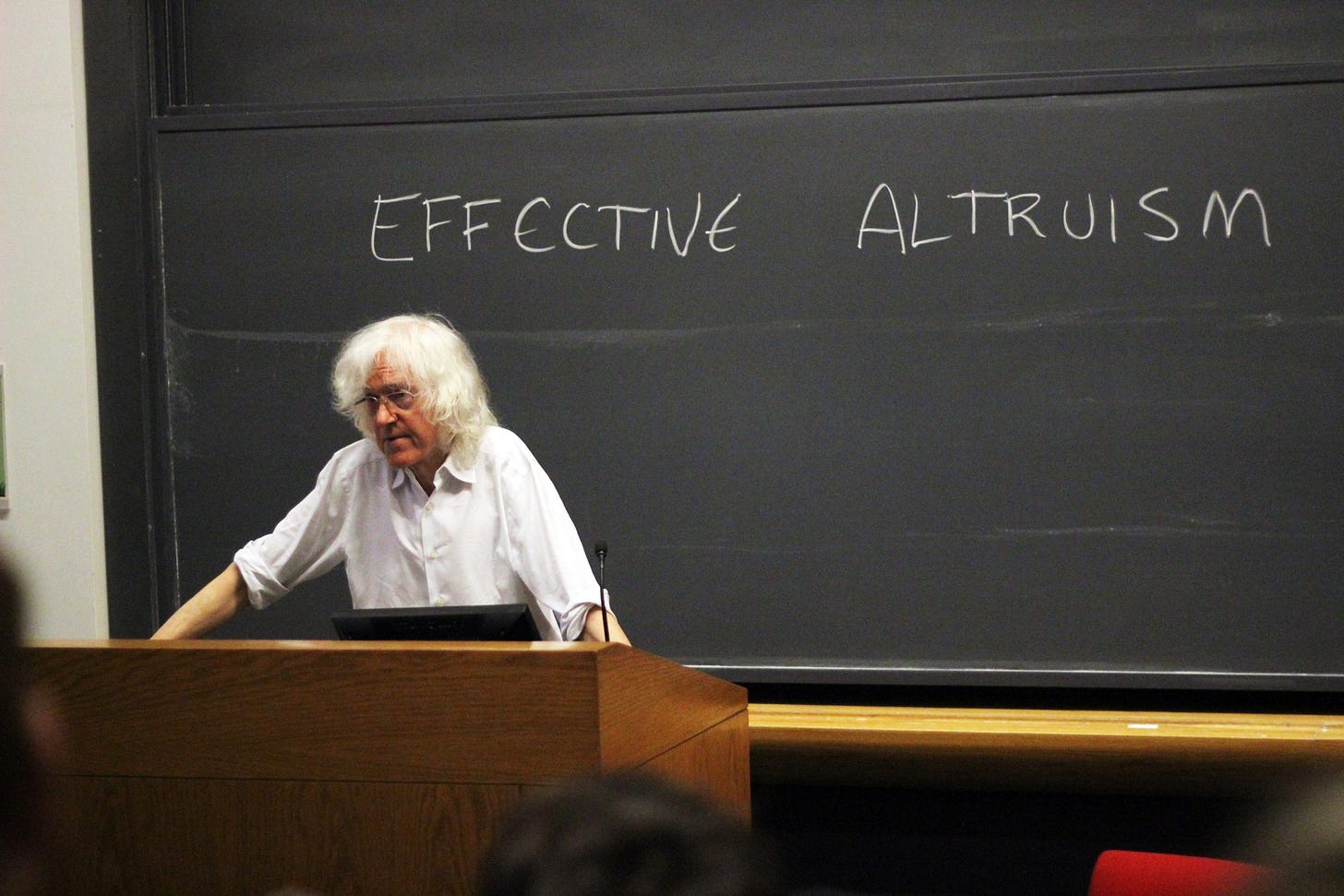

Separately, I read Reasons and Persons my junior year and that felt like an ideological nuclear bomb. I loved the way Derek Parfit thought and wrote. Reading more about him put effective altruism on my radar. I had known about Peter Singer and his basic arguments for effective giving for a few years, but got much more interested in EA arguments and thinking once I found the official movement.

I was basically sold, but at the time EA was (at least to my eyes) focused pretty exclusively on earning to give and running effective global health and animal welfare charities. I thought that was great but didn’t really see how I could contribute, so I didn’t actually reach out for another seven years. I just lurked on the forums, blogs, and podcasts, and donated to GiveWell and lab-grown meat.

Around 2015 I started seeing EA and rationalist arguments for AI x-risk. I remember reading reviews of Nick Bostrom’s Superintelligence and some summaries of the argument, and coming away with a deep sense of dread. Getting into philosophical naturalism about the mind had really primed me to take these arguments seriously.

If you think (as I did and do) that there’s nothing magical about the mind, talking about machines that could be way smarter than us sounds as reasonable as talking about machines that can lift way more weight than us. The implications of that seemed like a mental third rail that I didn’t want to touch.

I felt like I’d go crazy if I thought about it too much. I decided I didn’t actually want to look more into it and figured, “Well at least I’m lucky that these arguments are so irrelevant to my life right now. It’s all so far off and there’s no way I can add value here anyway.” It’s really funny thinking about how that was only a decade ago.

I started listening to the 80,000 Hours podcast pretty early, soon after it launched in 2017. That made a bunch of AI arguments feel much more accessible to me. Some were just so crazy fun. I remember the Hilary Greaves episode feeling like the most info-dense audio I had listened to at the time. Before that I didn’t see how to approach risks from superintelligence in a way that felt like serious thinking.

I’m very wary of thinking from first principles or doing anything that feels more like eschatology than sober prediction, and the conversations on 80k were so fruitful and grounded in comparison that they gave me a lot to run with. 80k is, I think, perpetually underrated as a resource despite having a lot of success. My writing’s been influenced a lot by their problem profile pages. They’re very comprehensive and don’t talk down to the reader.

I eventually got involved in the EA DC city group in 2020 during the pandemic. I had never met anyone else especially into EA before that. I’ve always been kind of ideologically snippy and avoidant of surrounding myself with people who agree with me too much, but I was consistently blown away by the people I was meeting in the scene.

I later took on the role of leading the EA DC city group, which mostly meant connecting people to each other, making the network legible to anyone who wanted to get involved, and representing EA to the broader public when I could.

This was a really incredible period of growth for me. And I felt and still feel like I wasn’t actually changing my views much based on social reward, something I worry about with any group of people into objectively weird ideas. For better or worse I find that most of my beliefs about big EA questions are the same as they were before I got involved. I’m pretty grateful I had so long to marinate in them.

In the last year I started to blog a lot more. I got a ton of traction on some early posts about AI and the environment and ran with it. I built up enough of an audience that people suggested I experiment with writing full-time, so I very recently stepped down from EA DC to do that. I want to experiment with getting across big ideas that have been important to me, and also do more deep dives on data centers, which is the thing that really built my audience to begin with. Almost everything I write will be focused on AI in one way or another.

What works of art have most shaped your views on AI?

I’m sure this one will be said a lot, but a theme of The Three-Body Problem is that within some local complex system you and/or your civilization can be doing everything right and succeeding on every metric. Society can grow and experience real progress for hundreds or thousands of years. But it can all be embedded in some larger system you barely understand where the rules work completely differently, and that can suddenly just upend everything.

The books are partly about characters trying to figure out the (often bleak) rules of these much larger alien systems, even though they may have lots of personal incentive to ignore them. I think this is just an obviously true fact about the world that’s very hard for us to keep in mind, because we evolved to operate in systems of social status that mostly reward treating the world like it’s going to stay constant.

I have a strong emotional inclination to be an upbeat techno-optimist about AI. I’m otherwise very excited about basically all the tech progress that’s happened in my lifetime, but I have to step out of that and think seriously about how inventing intelligent machines could be radically different from all prior tech, and The Three-Body Problem headspace is a great way to approach that.

I also think a lot about how some of the most important, meaningful experiences of art I’ve had wouldn’t have been possible without very recent technology. I wouldn’t have found a lot of the music I loved as a teenager without the internet, or been able to take walks listening to it without an iPod or Walkman. These were some of the most important experiences I’ve had.

I think it’s very easy to view new tech as if it’s going to be very flat and cold and inert. We have a lot of nostalgia for the past and it’s hard to imagine deep specific experiences like that coming from stuff that’s invented after we’re thirty. For a lot of people hearing about the internet for the first time, I’d imagine they might’ve just thought of all the emails and wires and glowing screens, and wouldn’t have felt in their gut that the internet would enable all these new deeply personal meaningful experiences.

So, when something as consequential as AI is on the horizon, I try to think about all the ways it could make life more meaningful and human for people, the way the internet did for me. There will be new experiences like walking around late at night listening to ’90s indie in suburban Massachusetts: something that feels like a fundamental part of reality to me but is actually so recent and contingent.

What’s your most contrarian take on AI?

Depends on who I’m talking to. I’ve built an audience by arguing that the environmental impacts of AI have been wildly overblown, and in a lot of spaces that’s seen as very contrarian, even though I think “using a computer program isn’t in the same ballpark as driving a car” should be just wildly obvious.

Within EA circles, I’m still pretty open to arguments for much longer timelines or that current systems won’t scale to AGI, although I’m just wildly uncertain.

After a decade of reading about it, I have almost no really confident takes on AI risk and AGI beyond, “Hmm, seems like a big deal,” and that’s feeling more and more uncomfortable every day. I’m hoping this is my year to develop a solid inside view.

What are you reading, watching, or listening to now?

Reading: The Search for Modern China. It’s considered one of the best general histories of the country, and it’s really fun.

Watching: I love Les Blank’s documentaries about New Orleans, like Always for Pleasure. I was watching them a lot during the pandemic. Gonna be working through the ones I haven’t seen.

Listening: Been a busy month for me so I’m not listening to much new or complex music. The Japancakes cover of Loveless has been really fun and surprisingly good for focus.

Go-to emerging tech track?

Half-jokingly, anything from the Akira soundtrack, especially “Kaneda’s Theme.” Or something very chaotic and overwhelming but certain of itself, like “Miss Fortune” by Faust.
